In the physical sciences, a particle is a small localized object to which several physical properties, such as volume or mass, can be ascribed.

In particle physics, an elementary particle (or fundamental particle) is a particle not known to be made up of smaller particles. If an elementary particle truly has no substructure, then it is one of the basic building blocks of the universe from which all other particles are made.

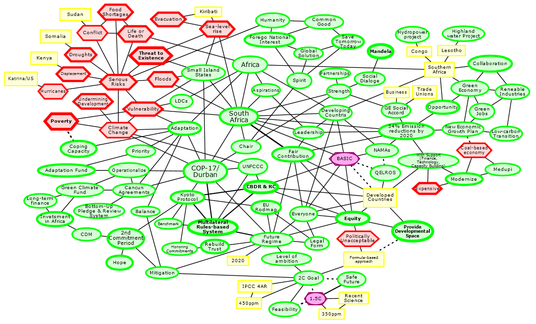

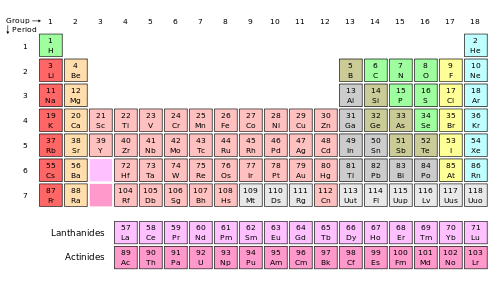

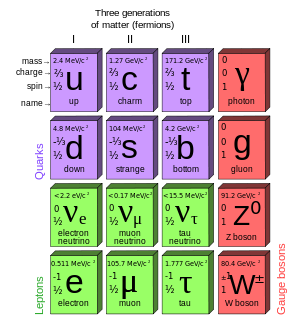

The Standard Model of particle physics has 61 particles :

- 2*3*3 (=18) quarks (fermions) with corresponding antiparticles (total 36)

- 2*3 (=6) leptons (fermions) with corresponding antiparticles (total 12)

- 1*8 gluons (bosons) without antiparticles (total 8)

- 1 W boson with one corresponding antiparticle (total 2)

- 1 Z boson without antiparticle (total 1)

- 1 photon (boson) without antiparticle (total 1)

- 1 Higgs boson without antiparticle (total 1)
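The count of 61 can be checked with a few lines of Python. This is only a minimal sketch of the arithmetic in the list above; the names `SPECIES` and `total_particles` are illustrative and do not come from any library.

```python
# Each entry: (name, number of distinct states, has a distinct antiparticle).
# The numbers are taken directly from the list above.
SPECIES = [
    ("quarks", 2 * 3 * 3, True),
    ("leptons", 2 * 3, True),
    ("gluons", 1 * 8, False),
    ("W boson", 1, True),
    ("Z boson", 1, False),
    ("photon", 1, False),
    ("Higgs boson", 1, False),
]


def total_particles(species):
    """Sum the states, counting each one twice when it has a distinct antiparticle."""
    return sum(n * (2 if has_anti else 1) for _, n, has_anti in species)


print(total_particles(SPECIES))  # 36 + 12 + 8 + 2 + 1 + 1 + 1 = 61
```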

Standard model of particles (Wikipedia)

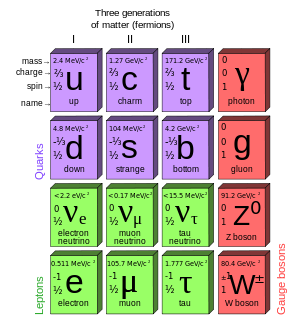

Quarks and leptons are fermions. According to the spin-statistics theorem, fermions respect the Pauli exclusion principle. Each fermion has a corresponding antiparticle.

Elementary fermions are matter particles, segmented as :

- 6 Quarks : up, down, charm, strange, top, bottom

- 3 Leptons with electrical charge : electron, muon, tau

- 3 Leptons without electrical charge : electron neutrino, muon neutrino, tau neutrino

Pairs from each classification are grouped together to form a generation, with corresponding particles exhibiting similar physical behavior.
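The grouping into generations can be sketched as a small data structure. The example below assumes the usual ordering, in which the quark pairs and the leptons are matched in the order they are listed above; the names `QUARK_PAIRS`, `CHARGED_LEPTONS`, `NEUTRINOS` and `generations` are hypothetical and chosen only for illustration.

```python
# Quarks taken pairwise in the order listed above: one pair per generation.
QUARK_PAIRS = [("up", "down"), ("charm", "strange"), ("top", "bottom")]
CHARGED_LEPTONS = ["electron", "muon", "tau"]
NEUTRINOS = ["electron neutrino", "muon neutrino", "tau neutrino"]


def generations():
    """Return the three generations, each with a quark pair and a lepton pair."""
    return [
        {"quarks": quarks, "charged_lepton": lepton, "neutrino": neutrino}
        for quarks, lepton, neutrino in zip(QUARK_PAIRS, CHARGED_LEPTONS, NEUTRINOS)
    ]


for number, generation in enumerate(generations(), start=1):
    print(number, generation)
```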

Elementary bosons are force-carrying particles, segmented as :

Gluons come in 8 types, each carrying a combination of color and anticolor charge.

The elementary fermions and bosons are represented in the following scheme :

Standard Model of elementary particles (Wikipedia)

The Higgs particle is a massive scalar elementary particle, meaning that its intrinsic spin is zero.

Additional elementary particles may exist, such as the graviton, which would mediate gravitation. Such particles lie beyond the Standard Model.

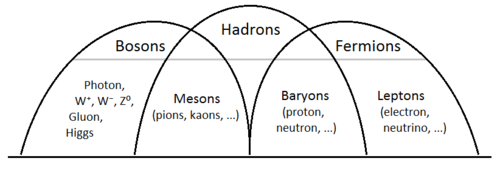

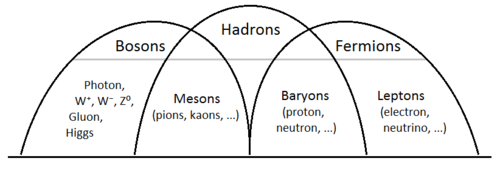

Hadrons are composite particles made of quarks, held together by the strong interaction (also called the strong force). Hadrons are categorized into two families: baryons and mesons. Baryons are hadrons that are fermions; mesons are hadrons that are bosons.

Baryons are made of three valence quarks. The best-known baryons are the proton and the neutron, which make up most of the mass of the visible matter in the universe. Together they form the atomic nucleus. An atom is a basic unit that consists of this dense central nucleus surrounded by a cloud of negatively charged electrons.

Each type of baryon has a corresponding antiparticle (antibaryon) in which quarks are replaced by their corresponding antiquarks. For example : just as a proton is made of two up-quarks and one down-quark, its corresponding antiparticle, the antiproton, is made of two up-antiquarks and one down-antiquark.
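The quark content also fixes the electric charge of a baryon. The sketch below assumes the standard quark charges of +2/3 for the up quark and -1/3 for the down quark (in units of the elementary charge), values that are not stated in the text above, and it assumes the usual neutron content of one up-quark and two down-quarks. The helper `hadron_charge` and the `anti-` prefix are illustrative conventions only.

```python
from fractions import Fraction

# Assumed quark charges in units of the elementary charge (not from the text above).
QUARK_CHARGE = {"u": Fraction(2, 3), "d": Fraction(-1, 3)}


def hadron_charge(quarks):
    """Sum the charges of the constituents; an 'anti-' prefix flips the sign."""
    total = Fraction(0)
    for quark in quarks:
        if quark.startswith("anti-"):
            total -= QUARK_CHARGE[quark[len("anti-"):]]
        else:
            total += QUARK_CHARGE[quark]
    return total


proton = ["u", "u", "d"]
antiproton = ["anti-" + quark for quark in proton]  # every quark replaced by its antiquark
neutron = ["u", "d", "d"]

print(hadron_charge(proton), hadron_charge(antiproton), hadron_charge(neutron))
```

Replacing every quark with its antiquark flips the sign of the total charge, which is why the antiproton carries the opposite charge of the proton.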

Mesons are hadronic subatomic particles composed of one quark and one antiquark, bound together by the strong interaction. Pions are the lightest mesons. A list of all mesons and a list of all particles are available at Wikipedia.

All particles of the Standard Model have been observed in nature, including the Higgs boson. Particles are described by quantum field theory, which builds on quantum mechanics. String theory is an active research framework in particle physics that attempts to reconcile quantum mechanics and general relativity. String theory posits that the elementary particles within an atom are not 0-dimensional objects, but rather 1-dimensional oscillating lines (strings). A key feature of string theory is the existence of D-branes. There are different versions of string theory. The version that incorporates fermions and supersymmetry is called superstring theory.

An extension of superstring theory is M-theory, in which 11 dimensions are identified. According to Stephen Hawking in particular, M-theory is the only candidate for a complete theory of the universe, the theory of everything (TOE): a self-contained mathematical model that describes all fundamental forces and forms of matter.