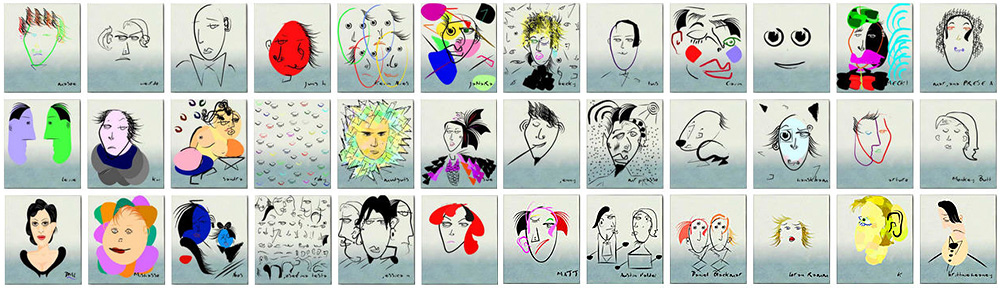

Cover of Science magazine, January 14, 2011

Culturomics is a form of computational lexicology that studies human behavior and cultural trends through the quantitative analysis of digitized texts. The term was coined in December 2010 in a Science article titled "Quantitative Analysis of Culture Using Millions of Digitized Books". The paper was published by a team spanning the Cultural Observatory at Harvard, Encyclopaedia Britannica, the American Heritage Dictionary and Google. The world’s first real-time culturomic browser was launched on Google Labs at the same time.
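To make "quantitative analysis of digitized texts" concrete, here is a minimal Python sketch that computes how often a word appears per year, relative to all words from that year. It is only an illustration of the general idea, not the method used in the Science paper: the function name `yearly_relative_frequency`, the whitespace tokenization and the toy corpus are all assumptions made up for this example.

```python
from collections import Counter


def yearly_relative_frequency(corpus, term):
    """Return {year: occurrences of term / total tokens in that year}.

    `corpus` maps a year to a list of text snippets (hypothetical format).
    Tokenization is a naive lowercase whitespace split, for illustration only.
    """
    term = term.lower()
    frequencies = {}
    for year, texts in sorted(corpus.items()):
        counts = Counter()
        for text in texts:
            counts.update(text.lower().split())
        total = sum(counts.values())
        # Guard against years with no text at all.
        frequencies[year] = counts[term] / total if total else 0.0
    return frequencies


# Toy corpus invented for this example; it is not real data.
toy_corpus = {
    1900: ["the telegraph is fast", "the horse is slow"],
    2000: ["the internet is fast", "the internet is everywhere"],
}

trend = yearly_relative_frequency(toy_corpus, "internet")
print(trend)
```

Dividing by the yearly total matters because the amount of text per year can vary widely, so raw counts alone would mostly reflect corpus size.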

The Cultural Observatory at Harvard is working to enable the quantitative study of human culture across societies and across centuries. This is done in three ways:

- Creation of massive datasets relevant to human culture

- Use of these datasets to power new types of analysis

- Development of tools that enable researchers and the general public to query the data

The Cultural Observatory is directed by Erez Lieberman Aiden and Jean-Baptiste Michel, who helped create the Google Labs project Google Ngram Viewer. The Observatory is hosted at Harvard’s Laboratory-at-Large.

Logo of the Science Hall of Fame

Links to additional information about Culturomics and related topics are listed below:

- The Science Hall of Fame (SHoF; supporting site: fame.gonzolabs.org), by Adrian Veres and John Bohannon (Wikipedia)

- ARTstor Digital Library (more than one million artworks)

- Europeana (digital resources of European museums and galleries)

- The Digital Scriptorium (collections of medieval and Renaissance manuscripts)