

Man-Computer Symbiosis

IRE Transactions on Human Factors in Electronics,

volume HFE-1, pages 4-11, March 1960

Summary

Man-computer symbiosis is an expected development in cooperative interaction between men and electronic computers. It will involve very close coupling between the human and the electronic members of the partnership. The main aims are 1) to let computers facilitate formulative thinking as they now facilitate the solution of formulated problems, and 2) to enable men and computers to cooperate in making decisions and controlling complex situations without inflexible dependence on predetermined programs. In the anticipated symbiotic partnership, men will set the goals, formulate the hypotheses, determine the criteria, and perform the evaluations. Computing machines will do the routinizable work that must be done to prepare the way for insights and decisions in technical and scientific thinking. Preliminary analyses indicate that the symbiotic partnership will perform intellectual operations much more effectively than man alone can perform them. Prerequisites for the achievement of the effective, cooperative association include developments in computer time sharing, in memory components, in memory organization, in programming languages, and in input and output equipment.

1 Introduction

1.1 Symbiosis

The fig tree is pollinated only by the insect Blastophaga grossorum. The larva of the insect lives in the ovary of the fig tree, and there it gets its food. The tree and the insect are thus heavily interdependent: the tree cannot reproduce without the insect; the insect cannot eat without the tree; together, they constitute not only a viable but a productive and thriving partnership. This cooperative “living together in intimate association, or even close union, of two dissimilar organisms” is called symbiosis [27].

Man-computer symbiosis is a subclass of man-machine systems. There are many man-machine systems. At present, however, there are no man-computer symbioses. The purposes of this paper are to present the concept and, hopefully, to foster the development of man-computer symbiosis by analyzing some problems of interaction between men and computing machines, calling attention to applicable principles of man-machine engineering, and pointing out a few questions to which research answers are needed. The hope is that, in not too many years, human brains and computing machines will be coupled together very tightly, and that the resulting partnership will think as no human brain has ever thought and process data in a way not approached by the information-handling machines we know today.

1.2 Between “Mechanically Extended Man” and “Artificial Intelligence”

As a concept, man-computer symbiosis is different in an important way from what North [21] has called “mechanically extended man.” In the man-machine systems of the past, the human operator supplied the initiative, the direction, the integration, and the criterion. The mechanical parts of the systems were mere extensions, first of the human arm, then of the human eye. These systems certainly did not consist of “dissimilar organisms living together…” There was only one kind of organism (man), and the rest was there only to help him.

In one sense, of course, any man-made system is intended to help man, to help a man or men outside the system. If we focus upon the human operator within the system, however, we see that, in some areas of technology, a fantastic change has taken place during the last few years. “Mechanical extension” has given way to replacement of men, to automation, and the men who remain are there more to help than to be helped. In some instances, particularly in large computer-centered information and control systems, the human operators are responsible mainly for functions that it proved infeasible to automate. Such systems (“humanly extended machines,” North might call them) are not symbiotic systems. They are “semi-automatic” systems, systems that started out to be fully automatic but fell short of the goal.

Man-computer symbiosis is probably not the ultimate paradigm for complex technological systems. It seems entirely possible that, in due course, electronic or chemical “machines” will outdo the human brain in most of the functions we now consider exclusively within its province. Even now, Gelernter’s IBM-704 program for proving theorems in plane geometry proceeds at about the same pace as Brooklyn high school students, and makes similar errors [12]. There are, in fact, several theorem-proving, problem-solving, chess-playing, and pattern-recognizing programs (too many for complete reference [1, 2, 5, 8, 11, 13, 17, 18, 19, 22, 23, 25]) capable of rivaling human intellectual performance in restricted areas; and Newell, Simon, and Shaw’s [20] “general problem solver” may remove some of the restrictions. In short, it seems worthwhile to avoid argument with (other) enthusiasts for artificial intelligence by conceding dominance in the distant future of cerebration to machines alone. There will nevertheless be a fairly long interim during which the main intellectual advances will be made by men and computers working together in intimate association. A multidisciplinary study group, examining future research and development problems of the Air Force, estimated that it would be 1980 before developments in artificial intelligence make it possible for machines alone to do much thinking or problem solving of military significance. That would leave, say, five years to develop man-computer symbiosis and 15 years to use it. The 15 may be 10 or 500, but those years should be intellectually the most creative and exciting in the history of mankind.

2 Aims of Man-Computer Symbiosis

Present-day computers are designed primarily to solve preformulated problems or to process data according to predetermined procedures. The course of the computation may be conditional upon results obtained during the computation, but all the alternatives must be foreseen in advance. (If an unforeseen alternative arises, the whole process comes to a halt and awaits the necessary extension of the program.) The requirement for preformulation or predetermination is sometimes no great disadvantage. It is often said that programming for a computing machine forces one to think clearly, that it disciplines the thought process. If the user can think his problem through in advance, symbiotic association with a computing machine is not necessary.
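The halting behaviour described above can be pictured in modern terms. The Python below is a hypothetical illustration, not anything from the paper: every branch the programmer foresaw sits in a table (`FORESEEN_BRANCHES` and the other names are invented), and an input that fits none of them, here a NaN, stops the run instead of being handled.

```python
# Hypothetical sketch: a "preformulated" program whose branches were all
# foreseen in advance. Any unforeseen case halts the whole run.

class ProgramHalt(Exception):
    """Raised when the program meets an alternative nobody foresaw."""

# Every alternative must be listed in advance (names are illustrative).
FORESEEN_BRANCHES = {
    "positive": lambda x: x * 2,
    "zero": lambda x: 0,
    "negative": lambda x: -x,
}

def classify(x):
    if x > 0:
        return "positive"
    if x == 0:
        return "zero"
    if x < 0:
        return "negative"
    return "unclassifiable"  # e.g. NaN: none of the comparisons is true

def run_preformulated(x):
    branch = classify(x)
    if branch not in FORESEEN_BRANCHES:
        # The process stops and awaits the necessary extension of the program.
        raise ProgramHalt(f"no routine for case {branch!r}; program must be extended")
    return FORESEEN_BRANCHES[branch](x)

print(run_preformulated(3))   # 6
try:
    run_preformulated(float("nan"))
except ProgramHalt as exc:
    print("halted:", exc)
```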

However, many problems that can be thought through in advance are very difficult to think through in advance. They would be easier to solve, and they could be solved faster, through an intuitively guided trial-and-error procedure in which the computer cooperated, turning up flaws in the reasoning or revealing unexpected turns in the solution. Other problems simply cannot be formulated without computing-machine aid. Poincaré anticipated the frustration of an important group of would-be computer users when he said, “The question is not, ‘What is the answer?’ The question is, ‘What is the question?’” One of the main aims of man-computer symbiosis is to bring the computing machine effectively into the formulative parts of technical problems.

The other main aim is closely related. It is to bring computing machines effectively into processes of thinking that must go on in “real time,” time that moves too fast to permit using computers in conventional ways. Imagine trying, for example, to direct a battle with the aid of a computer on such a schedule as this. You formulate your problem today. Tomorrow you spend with a programmer. Next week the computer devotes 5 minutes to assembling your program and 47 seconds to calculating the answer to your problem. You get a sheet of paper 20 feet long, full of numbers that, instead of providing a final solution, only suggest a tactic that should be explored by simulation. Obviously, the battle would be over before the second step in its planning was begun. To think in interaction with a computer in the same way that you think with a colleague whose competence supplements your own will require much tighter coupling between man and machine than is suggested by the example and than is possible today.

3 Need for Computer Participation in Formulative and Real-Time Thinking

The preceding paragraphs tacitly made the assumption that, if they could be introduced effectively into the thought process, the functions that can be performed by data-processing machines would improve or facilitate thinking and problem solving in an important way. That assumption may require justification.

3.1 A Preliminary and Informal Time-and-Motion Analysis of Technical Thinking

Despite the fact that there is a voluminous literature on thinking and problem solving, including intensive case-history studies of the process of invention, I could find nothing comparable to a time-and-motion-study analysis of the mental work of a person engaged in a scientific or technical enterprise. In the spring and summer of 1957, therefore, I tried to keep track of what one moderately technical person actually did during the hours he regarded as devoted to work. Although I was aware of the inadequacy of the sampling, I served as my own subject.

It soon became apparent that the main thing I did was to keep records, and the project would have become an infinite regress if the keeping of records had been carried through in the detail envisaged in the initial plan. It was not. Nevertheless, I obtained a picture of my activities that gave me pause. Perhaps my spectrum is not typical; I hope it is not, but I fear it is.

About 85 per cent of my “thinking” time was spent getting into a position to think, to make a decision, to learn something I needed to know. Much more time went into finding or obtaining information than into digesting it. Hours went into the plotting of graphs, and other hours into instructing an assistant how to plot. When the graphs were finished, the relations were obvious at once, but the plotting had to be done in order to make them so. At one point, it was necessary to compare six experimental determinations of a function relating speech-intelligibility to speech-to-noise ratio. No two experimenters had used the same definition or measure of speech-to-noise ratio. Several hours of calculating were required to get the data into comparable form. When they were in comparable form, it took only a few seconds to determine what I needed to know.

Throughout the period I examined, in short, my “thinking” time was devoted mainly to activities that were essentially clerical or mechanical: searching, calculating, plotting, transforming, determining the logical or dynamic consequences of a set of assumptions or hypotheses, preparing the way for a decision or an insight. Moreover, my choices of what to attempt and what not to attempt were determined to an embarrassingly great extent by considerations of clerical feasibility, not intellectual capability.

The main suggestion conveyed by the findings just described is that the operations that fill most of the time allegedly devoted to technical thinking are operations that can be performed more effectively by machines than by men. Severe problems are posed by the fact that these operations have to be performed upon diverse variables and in unforeseen and continually changing sequences. If those problems can be solved in such a way as to create a symbiotic relation between a man and a fast information-retrieval and data-processing machine, however, it seems evident that the cooperative interaction would greatly improve the thinking process.

It may be appropriate to acknowledge, at this point, that we are using the term “computer” to cover a wide class of calculating, data-processing, and information-storage-and-retrieval machines. The capabilities of machines in this class are increasing almost daily. It is therefore hazardous to make general statements about capabilities of the class. Perhaps it is equally hazardous to make general statements about the capabilities of men. Nevertheless, certain genotypic differences in capability between men and computers do stand out, and they have a bearing on the nature of possible man-computer symbiosis and the potential value of achieving it.

As has been said in various ways, men are noisy, narrow-band devices, but their nervous systems have very many parallel and simultaneously active channels. Relative to men, computing machines are very fast and very accurate, but they are constrained to perform only one or a few elementary operations at a time. Men are flexible, capable of “programming themselves contingently” on the basis of newly received information. Computing machines are single-minded, constrained by their “pre-programming.” Men naturally speak redundant languages organized around unitary objects and coherent actions and employing 20 to 60 elementary symbols. Computers “naturally” speak nonredundant languages, usually with only two elementary symbols and no inherent appreciation either of unitary objects or of coherent actions.

To be rigorously correct, those characterizations would have to include many qualifiers. Nevertheless, the picture of dissimilarity (and therefore potential supplementation) that they present is essentially valid. Computing machines can do readily, well, and rapidly many things that are difficult or impossible for man, and men can do readily and well, though not rapidly, many things that are difficult or impossible for computers. That suggests that a symbiotic cooperation, if successful in integrating the positive characteristics of men and computers, would be of great value. The differences in speed and in language, of course, pose difficulties that must be overcome.

4 Separable Functions of Men and Computers in the Anticipated Symbiotic Association

It seems likely that the contributions of human operators and equipment will blend together so completely in many operations that it will be difficult to separate them neatly in analysis. That would be the case if, in gathering data on which to base a decision, for example, both the man and the computer came up with relevant precedents from experience and if the computer then suggested a course of action that agreed with the man’s intuitive judgment. (In theorem-proving programs, computers find precedents in experience, and in the SAGE System, they suggest courses of action. The foregoing is not a far-fetched example.) In other operations, however, the contributions of men and equipment will be to some extent separable.

Men will set the goals and supply the motivations, of course, at least in the early years. They will formulate hypotheses. They will ask questions. They will think of mechanisms, procedures, and models. They will remember that such-and-such a person did some possibly relevant work on a topic of interest back in 1947, or at any rate shortly after World War II, and they will have an idea in what journals it might have been published. In general, they will make approximate and fallible, but leading, contributions, and they will define criteria and serve as evaluators, judging the contributions of the equipment and guiding the general line of thought.

In addition, men will handle the very-low-probability situations when such situations do actually arise. (In current man-machine systems, that is one of the human operator’s most important functions. The sum of the probabilities of very-low-probability alternatives is often much too large to neglect.) Men will fill in the gaps, either in the problem solution or in the computer program, when the computer has no mode or routine that is applicable in a particular circumstance.
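A worked illustration, with numbers invented purely for the arithmetic, shows why that sum cannot be neglected. Suppose a system faces 200 mutually exclusive rare alternatives, each with probability $p_i = 0.001$:

```latex
P(\text{some rare alternative occurs}) = \sum_{i=1}^{200} p_i = 200 \times 0.001 = 0.2
```

Each case alone looks negligible, yet on these assumptions one operation in five meets some case the routine program was not built for.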

The information-processing equipment, for its part, will convert hypotheses into testable models and then test the models against data (which the human operator may designate roughly and identify as relevant when the computer presents them for his approval). The equipment will answer questions. It will simulate the mechanisms and models, carry out the procedures, and display the results to the operator. It will transform data, plot graphs (“cutting the cake” in whatever way the human operator specifies, or in several alternative ways if the human operator is not sure what he wants). The equipment will interpolate, extrapolate, and transform. It will convert static equations or logical statements into dynamic models so the human operator can examine their behavior. In general, it will carry out the routinizable, clerical operations that fill the intervals between decisions.
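Converting a static equation into a dynamic model can be sketched as follows. This is a hypothetical example, not a method given in the paper: the static statement is $dx/dt = -kx$ (exponential decay), and forward-Euler stepping, the constant `k`, and the step size are assumed choices that let an operator watch the behaviour unfold.

```python
# Hypothetical sketch: turning the static statement dx/dt = -k*x into a
# dynamic model the operator can step through and examine.
# Forward-Euler integration is an illustrative choice, not the paper's.

def simulate(rate_fn, x0, dt, steps):
    """Return the trajectory [x0, x1, ..., x_steps] under forward Euler."""
    xs = [x0]
    x = x0
    for _ in range(steps):
        x = x + dt * rate_fn(x)   # advance one time step of length dt
        xs.append(x)
    return xs

k = 0.5  # assumed decay constant
trajectory = simulate(lambda x: -k * x, x0=100.0, dt=0.1, steps=20)

# Show every fifth state, i.e. every 0.5 time units.
for i, x in enumerate(trajectory[::5]):
    print(f"t={i * 0.5:.1f}  x={x:.2f}")
```

Each Euler step here multiplies `x` by `1 - k*dt = 0.95`; a smaller `dt` would track the exact exponential more closely.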

In addition, the computer will serve as a statistical-inference, decision-theory, or game-theory machine to make elementary evaluations of suggested courses of action whenever there is enough basis to support a formal statistical analysis. Finally, it will do as much diagnosis, pattern-matching, and relevance-recognizing as it profitably can, but it will accept a clearly secondary status in those areas.
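An "elementary evaluation" of the decision-theory kind might look like the hypothetical sketch below: each suggested course of action is given assumed outcome probabilities and payoffs, and the machine ranks courses by expected payoff. All names and numbers are invented; in the symbiotic scheme the man would still judge whether the ranking makes sense.

```python
# Hypothetical sketch: ranking suggested courses of action by expected payoff.
# Course names, probabilities, and payoffs are invented for illustration.

courses = {
    "hold position": [(0.7, 10), (0.3, -5)],   # (probability, payoff)
    "advance":       [(0.5, 30), (0.5, -20)],
    "withdraw":      [(1.0, 0)],
}

def expected_value(outcomes):
    """Probability-weighted payoff of one course of action."""
    if abs(sum(p for p, _ in outcomes) - 1.0) > 1e-9:
        raise ValueError("outcome probabilities must sum to 1")
    return sum(p * payoff for p, payoff in outcomes)

ranked = sorted(courses, key=lambda c: expected_value(courses[c]), reverse=True)
for c in ranked:
    print(f"{c:14s} EV = {expected_value(courses[c]):+.1f}")
```

Note how close "hold position" (5.5) and "advance" (5.0) come out: exactly the kind of result a human evaluator would want to question before acting.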

5 Prerequisites for Realization of Man-Computer Symbiosis

The data-processing equipment tacitly postulated in the preceding section is not available. The computer programs have not been written. There are in fact several hurdles that stand between the nonsymbiotic present and the anticipated symbiotic future. Let us examine some of them to see more clearly what is needed and what the chances are of achieving it.

5.1 Speed Mismatch Between Men and Computers

Any present-day large-scale computer is too fast and too costly for real-time cooperative thinking with one man. Clearly, for the sake of efficiency and economy, the computer must divide its time among many users. Timesharing systems are currently under active development. There are even arrangements to keep users from “clobbering” anything but their own personal programs.
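Time sharing with protection against "clobbering" can be illustrated with a toy round-robin scheduler. This is a hypothetical sketch, not a description of any 1960 system: each user's job gets a fixed quantum of steps in turn, and the invented `Memory` class refuses any write outside the writer's own segment.

```python
# Hypothetical sketch of time sharing: round-robin turns of `quantum` steps,
# with each user confined to a private memory segment.
from collections import deque

class Memory:
    """Each user owns one segment; writes outside it are refused."""
    def __init__(self, users, size):
        self.segments = {u: [0] * size for u in users}

    def write(self, owner, target, addr, value):
        if owner != target:
            raise PermissionError(f"{owner} may not write to {target}'s segment")
        self.segments[target][addr] = value

def user_program(name, mem, total_steps):
    """Toy job: writes 1, 2, ... into its own segment, one word per step."""
    for i in range(total_steps):
        mem.write(name, name, i, i + 1)
        yield  # give the machine back to the scheduler

def round_robin(jobs, quantum):
    """Run jobs in turn, `quantum` steps each; return who ran at each step."""
    queue = deque(jobs.items())
    trace = []
    while queue:
        name, job = queue.popleft()
        for _ in range(quantum):
            try:
                next(job)
            except StopIteration:
                break                      # job finished: drop it
            trace.append(name)
        else:
            queue.append((name, job))      # quantum used up: back of the line
    return trace

users = ["A", "B", "C"]
mem = Memory(users, size=8)
jobs = {u: user_program(u, mem, n) for u, n in zip(users, [3, 5, 2])}
print("".join(round_robin(jobs, quantum=2)))   # AABBCCABBB

try:
    mem.write("A", "B", 0, 99)             # A tries to clobber B's program
except PermissionError as exc:
    print("refused:", exc)
```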

It seems reasonable to envision, for a time 10 or 15 years hence, a “thinking center” that will incorporate the functions of present-day libraries together with anticipated advances in information storage and retrieval and the symbiotic functions suggested earlier in this paper. The picture readily enlarges itself into a network of such centers, connected to one another by wide-band communication lines and to individual users by leased-wire services. In such a system, the speed of the computers would be balanced, and the cost of the gigantic memories and the sophisticated programs would be divided by the number of users.

5.2 Memory Hardware Requirements

When we start to think of storing any appreciable fraction of a technical literature in computer memory, we run into billions of bits and, unless things change markedly, billions of dollars.

The first thing to face is that we shall not store all the technical and scientific papers in computer memory. We may store the parts that can be summarized most succinctly (the quantitative parts and the reference citations), but not the whole. Books are among the most beautifully engineered, and human-engineered, components in existence, and they will continue to be functionally important within the context of man-computer symbiosis. (Hopefully, the computer will expedite the finding, delivering, and returning of books.)

The second point is that a very important section of memory will be permanent: part indelible memory and part published memory. The computer will be able to write once into indelible memory, and then read back indefinitely, but the computer will not be able to erase indelible memory. (It may also over-write, turning all the 0’s into 1’s, as though marking over what was written earlier.) Published memory will be “read-only” memory. It will be introduced into the computer already structured. The computer will be able to refer to it repeatedly, but not to change it. These types of memory will become more and more important as computers grow larger. They can be made more compact than core, thin-film, or even tape memory, and they will be much less expensive. The main engineering problems will concern selection circuitry.
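The behaviour of the two permanent memory types can be modelled in software. The sketch below is a hypothetical illustration, not a hardware design: `IndelibleMemory` (an invented class) accepts one write per word and afterwards allows only 0-to-1 "marking over" via bitwise OR, and Python's `types.MappingProxyType` stands in for pre-structured, read-only published memory.

```python
# Hypothetical sketch of indelible (write-once) and published (read-only) memory.
from types import MappingProxyType

class IndelibleMemory:
    def __init__(self, size, width=8):
        self.words = [0] * size
        self.written = [False] * size
        self.mask = (1 << width) - 1    # keep values within the word width

    def write(self, addr, value):
        """Write a word once; a second write to the same word is refused."""
        if self.written[addr]:
            raise ValueError(f"word {addr} is indelible; it cannot be rewritten")
        self.words[addr] = value & self.mask
        self.written[addr] = True

    def overwrite(self, addr, value):
        """'Mark over' a word: 0s may become 1s, but no 1 ever becomes 0."""
        self.words[addr] |= value & self.mask
        self.written[addr] = True

    def read(self, addr):
        return self.words[addr]

mem = IndelibleMemory(4)
mem.write(0, 0b1010_0000)
mem.overwrite(0, 0b0000_0101)
print(bin(mem.read(0)))                  # 0b10100101

# Published memory: arrives already structured and can only be read.
published = MappingProxyType({"pi": 3.14159, "e": 2.71828})
print(published["pi"])
try:
    published["pi"] = 3                  # read-only: raises TypeError
except TypeError as exc:
    print("refused:", exc)
```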

In so far as other aspects of memory requirement are concerned, we may count upon the continuing development of ordinary scientific and business computing machines. There is some prospect that memory elements will become as fast as processing (logic) elements. That development would have a revolutionary effect upon the design of computers.

5.3 Memory Organization Requirements

Implicit in the idea of man-computer symbiosis are the requirements that information be retrievable both by name and by pattern and that it be accessible through procedure much faster than serial search. At least half of the problem of memory organization appears to reside in the storage procedure. Most of the remainder seems to be wrapped up in the problem of pattern recognition within the storage mechanism or medium. Detailed discussion of these problems is beyond the present scope. However, a brief outline of one promising idea, “trie memory,” may serve to indicate the general nature of anticipated developments.

Trie memory is so called by its originator, Fredkin [10], because it is designed to facilitate retrieval of information and because the branching storage structure, when developed, resembles a tree. Most common memory systems store functions of arguments at locations designated by the arguments. (In one sense, they do not store the arguments at all. In another and more realistic sense, they store all the possible arguments in the framework structure of the memory.) The trie memory system, on the other hand, stores both the functions and the arguments. The argument is introduced into the memory first, one character at a time, starting at a standard initial register. Each argument register has one cell for each character of the ensemble (e.g., two for information encoded in binary form) and each character cell has within it storage space for the address of the next register. The argument is stored by writing a series of addresses, each one of which tells where to find the next. At the end of the argument is a special “end-of-argument” marker. Then follow directions to the function, which is stored in one or another of several ways, either further trie structure or “list structure” often being most effective.
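The register scheme just described can be mimicked in a few lines. This is a hypothetical sketch under stated assumptions, not Fredkin's implementation: the character ensemble (`ENSEMBLE`), the extra end-of-argument cell (`END`), and the separate `functions` store are illustrative choices, and Python lists play the role of registers and addresses.

```python
# Hypothetical sketch of trie memory: a table of registers, each with one cell
# per character of the ensemble; a cell holds the address of the next register.

ENSEMBLE = "abcdefghijklmnopqrstuvwxyz ,."
END = len(ENSEMBLE)               # extra cell: the end-of-argument marker
CELLS = len(ENSEMBLE) + 1

class TrieMemory:
    def __init__(self):
        self.registers = [[None] * CELLS]   # register 0: standard initial register
        self.functions = []                  # where the stored functions live

    def _cell(self, ch):
        return ENSEMBLE.index(ch)            # raises ValueError outside the ensemble

    def store(self, argument, function):
        reg = 0
        for ch in argument:
            c = self._cell(ch)
            if self.registers[reg][c] is None:        # allocate only when needed
                self.registers.append([None] * CELLS)
                self.registers[reg][c] = len(self.registers) - 1
            reg = self.registers[reg][c]              # follow the address
        if self.registers[reg][END] is None:          # directions to the function
            self.functions.append(function)
            self.registers[reg][END] = len(self.functions) - 1
        else:
            self.functions[self.registers[reg][END]] = function

    def retrieve(self, argument):
        reg = 0
        for ch in argument:
            reg = self.registers[reg][self._cell(ch)]
            if reg is None:
                return None                           # argument never stored
        f = self.registers[reg][END]
        return None if f is None else self.functions[f]

mem = TrieMemory()
mem.store("matrix multiplication", "MATMUL routine")   # placeholder "functions"
mem.store("matrix inversion", "MATINV routine")
print(mem.retrieve("matrix multiplication"))   # MATMUL routine
print(mem.retrieve("matrix"))                  # None: a prefix, not a stored argument
print(len(mem.registers))                      # 31
```

The register count shows prefix sharing: "matrix " (7 characters) is stored once, so the second argument adds only 9 registers instead of 16.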

The trie memory scheme is inefficient for small memories, but it becomes increasingly efficient in using available storage space as memory size increases. The attractive features of the scheme are these:

1) The retrieval process is extremely simple. Given the argument, enter the standard initial register with the first character, and pick up the address of the second. Then go to the second register, and pick up the address of the third, etc.
2) If two arguments have initial characters in common, they use the same storage space for those characters.
3) The lengths of the arguments need not be the same, and need not be specified in advance.
4) No room in storage is reserved for or used by any argument until it is actually stored. The trie structure is created as the items are introduced into the memory.
5) A function can be used as an argument for another function, and that function as an argument for the next. Thus, for example, by entering with the argument, “matrix multiplication,” one might retrieve the entire program for performing a matrix multiplication on the computer.
6) By examining the storage at a given level, one can determine what thus-far similar items have been stored. For example, if there is no citation for Egan, J. P., it is but a step or two backward to pick up the trail of Egan, James … .
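Features 2 and 6 can be seen in a compact form. In this hypothetical sketch a nested-dict trie stands in for the register table, `END` is an assumed end-of-argument key, and the stored strings reuse author names cited in this paper purely as placeholder keys. When a lookup fails, `back_off` steps backward one character at a time until the remaining prefix has stored items, which is one way to "pick up the trail".

```python
# Hypothetical sketch: browsing "thus-far similar" items in a trie.
END = "\0"   # assumed end-of-argument key

def store(trie, argument, function):
    node = trie
    for ch in argument:
        node = node.setdefault(ch, {})   # shared prefixes share nodes
    node[END] = function

def items_under(trie, prefix):
    """Return every stored argument that begins with `prefix`, sorted."""
    node = trie
    for ch in prefix:
        if ch not in node:
            return []
        node = node[ch]
    found = []
    stack = [(node, prefix)]
    while stack:
        node, so_far = stack.pop()
        for ch, child in node.items():
            if ch == END:
                found.append(so_far)
            else:
                stack.append((child, so_far + ch))
    return sorted(found)

def back_off(trie, key):
    """Step backward from `key` until the remaining prefix has stored items."""
    for cut in range(len(key), -1, -1):
        hits = items_under(trie, key[:cut])
        if hits:
            return key[:cut], hits
    return "", []

citations = {}
for name in ["Egan, James P.", "Fant, G.", "Fredkin, E."]:
    store(citations, name, f"<record for {name}>")   # placeholder records

print(back_off(citations, "Egan, J. P."))   # ('Egan, J', ['Egan, James P.'])
print(items_under(citations, "F"))          # ['Fant, G.', 'Fredkin, E.']
```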

The properties just described do not include all the desired ones, but they bring computer storage into resonance with human operators and their predilection to designate things by naming or pointing.

5.4 The Language Problem

The basic dissimilarity between human languages and computer languages may be the most serious obstacle to true symbiosis. It is reassuring, however, to note what great strides have already been made, through interpretive programs and particularly through assembly or compiling programs such as FORTRAN, to adapt computers to human language forms. The “Information Processing Language” of Shaw, Newell, Simon, and Ellis [24] represents another line of rapprochement. And, in ALGOL and related systems, men are proving their flexibility by adopting standard formulas of representation and expression that are readily translatable into machine language.

For the purposes of real-time cooperation between men and computers, it will be necessary, however, to make use of an additional and rather different principle of communication and control. The idea may be highlighted by comparing instructions ordinarily addressed to intelligent human beings with instructions ordinarily used with computers. The latter specify precisely the individual steps to take and the sequence in which to take them. The former present or imply something about incentive or motivation, and they supply a criterion by which the human executor of the instructions will know when he has accomplished his task. In short: instructions directed to computers specify courses; instructions directed to human beings specify goals.

Men appear to think more naturally and easily in terms of goals than in terms of courses. True, they usually know something about directions in which to travel or lines along which to work, but few start out with precisely formulated itineraries. Who, for example, would depart from Boston for Los Angeles with a detailed specification of the route? Instead, to paraphrase Wiener, men bound for Los Angeles try continually to decrease the amount by which they are not yet in the smog.

Computer instruction through specification of goals is being approached along two paths. The first involves problem-solving, hill-climbing, self-organizing programs. The second involves real-time concatenation of preprogrammed segments and closed subroutines which the human operator can designate and call into action simply by name.

Along the first of these paths, there has been promising exploratory work. It is clear that, working within the loose constraints of predetermined strategies, computers will in due course be able to devise and simplify their own procedures for achieving stated goals. Thus far, the achievements have not been substantively important; they have constituted only “demonstration in principle.” Nevertheless, the implications are far-reaching.
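The difference between specifying a goal and specifying a course can be illustrated with hill climbing, one of the techniques named above. In this hypothetical sketch the operator supplies only a score to maximize (closeness to an invented destination, echoing Wiener's traveller), and a random-step hill climber works out the route. The step size, iteration count, and seed are assumptions.

```python
# Hypothetical sketch: the operator states a goal (a score to maximize);
# a simple random-step hill climber finds a course toward it.
import random

def hill_climb(score, start, step=0.1, iters=2000, seed=0):
    rng = random.Random(seed)          # fixed seed for a repeatable run
    best = list(start)
    best_score = score(best)
    for _ in range(iters):
        candidate = [x + rng.uniform(-step, step) for x in best]
        s = score(candidate)
        if s > best_score:             # keep only moves that climb
            best, best_score = candidate, s
    return best, best_score

# Goal: keep decreasing the distance still to go to an assumed destination.
destination = (3.0, -2.0)

def closeness(p):
    return -((p[0] - destination[0]) ** 2 + (p[1] - destination[1]) ** 2)

point, s = hill_climb(closeness, start=(0.0, 0.0))
print([round(v, 2) for v in point], round(s, 4))
```

No route was written down in advance; only the criterion for "better" was supplied, which is the sense in which such programs accept goals rather than courses.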

Although the second path is simpler and apparently capable of earlier realization, it has been relatively neglected. Fredkin’s trie memory provides a promising paradigm. We may in due course see a serious effort to develop computer programs that can be connected together like the words and phrases of speech to do whatever computation or control is required at the moment. The consideration that holds back such an effort, apparently, is that the effort would produce nothing that would be of great value in the context of existing computers. It would be unrewarding to develop the language before there are any computing machines capable of responding meaningfully to it.
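The second path, calling preprogrammed closed subroutines by name and chaining them like words in a phrase, can be sketched as follows. The vocabulary in `SUBROUTINES` and the left-to-right composition rule are hypothetical choices made for illustration.

```python
# Hypothetical sketch: closed subroutines designated by name and concatenated
# at run time, applied left to right like the words of a phrase.
import statistics

SUBROUTINES = {
    "square": lambda xs: [x * x for x in xs],
    "sort": sorted,
    "mean": statistics.mean,
    "top3": lambda xs: xs[-3:],
}

def run_phrase(phrase, data):
    """Apply the named subroutines in order, e.g. 'square sort top3'."""
    result = data
    for word in phrase.split():
        if word not in SUBROUTINES:
            raise KeyError(f"no subroutine named {word!r}")
        result = SUBROUTINES[word](result)
    return result

data = [4, -1, 3, 2, -5]
print(run_phrase("square sort top3", data))   # [9, 16, 25]
print(run_phrase("square mean", data))
```

An unknown word raises an error rather than guessing, which marks the point where, in the paper's terms, the man must fill the gap.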

5.5 Input and Output Equipment

The department of data processing that seems least advanced, in so far as the requirements of man-computer symbiosis are concerned, is the one that deals with input and output equipment or, as it is seen from the human operator’s point of view, displays and controls. Immediately after saying that, it is essential to make qualifying comments, because the engineering of equipment for high-speed introduction and extraction of information has been excellent, and because some very sophisticated display and control techniques have been developed in such research laboratories as the Lincoln Laboratory. By and large, in generally available computers, however, there is almost no provision for any more effective, immediate man-machine communication than can be achieved with an electric typewriter.

Displays seem to be in a somewhat better state than controls. Many computers plot graphs on oscilloscope screens, and a few take advantage of the remarkable capabilities, graphical and symbolic, of the charactron display tube. Nowhere, to my knowledge, however, is there anything approaching the flexibility and convenience of the pencil and doodle pad or the chalk and blackboard used by men in technical discussion.

1) Desk-Surface Display and Control: Certainly, for effective man-computer interaction, it will be necessary for the man and the computer to draw graphs and pictures and to write notes and equations to each other on the same display surface. The man should be able to present a function to the computer, in a rough but rapid fashion, by drawing a graph. The computer should read the man’s writing, perhaps on the condition that it be in clear block capitals, and it should immediately post, at the location of each hand-drawn symbol, the corresponding character as interpreted and put into precise type-face. With such an input-output device, the operator would quickly learn to write or print in a manner legible to the machine. He could compose instructions and subroutines, set them into proper format, and check them over before introducing them finally into the computer’s main memory. He could even define new symbols, as Gilmore and Savell [14] have done at the Lincoln Laboratory, and present them directly to the computer. He could sketch out the format of a table roughly and let the computer shape it up with precision. He could correct the computer’s data, instruct the machine via flow diagrams, and in general interact with it very much as he would with another engineer, except that the “other engineer” would be a precise draftsman, a lightning calculator, a mnemonic wizard, and many other valuable partners all in one.
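Presenting a function "by drawing a graph" implies that the computer turns a few rough points into something it can evaluate. A hypothetical sketch: piecewise-linear interpolation between the sketched points, with values clamped outside the drawn range. Both choices are assumptions; smoother fits would serve equally well.

```python
# Hypothetical sketch: turning a rough hand-drawn curve, given as (x, y)
# points, into a callable function by piecewise-linear interpolation.
from bisect import bisect_right

def function_from_sketch(points):
    pts = sorted(points)
    xs = [x for x, _ in pts]
    ys = [y for _, y in pts]

    def f(x):
        if x <= xs[0]:
            return ys[0]               # clamp left of the drawing
        if x >= xs[-1]:
            return ys[-1]              # clamp right of the drawing
        i = bisect_right(xs, x)        # xs[i-1] <= x < xs[i]
        x0, x1, y0, y1 = xs[i - 1], xs[i], ys[i - 1], ys[i]
        return y0 + (y1 - y0) * (x - x0) / (x1 - x0)

    return f

sketch = [(0, 0), (1, 2), (3, 3), (4, 1)]   # a rough hand-drawn curve
f = function_from_sketch(sketch)
print([f(x) for x in (0, 0.5, 2, 3.5, 5)])   # [0, 1.0, 2.5, 2.0, 1]
```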

2) Computer-Posted Wall Display: In some technological systems, several men share responsibility for controlling vehicles whose behaviors interact. Some information must be presented simultaneously to all the men, preferably on a common grid, to coordinate their actions. Other information is of relevance only to one or two operators. There would be only a confusion of uninterpretable clutter if all the information were presented on one display to all of them. The information must be posted by a computer, since manual plotting is too slow to keep it up to date.
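The split between shared and individual information can be expressed as a simple routing rule. In this hypothetical sketch each posted item carries an audience tag: items for everyone go to the common wall display, and the rest go only to the named operators' consoles. Item texts and operator labels are invented.

```python
# Hypothetical sketch: routing computer-posted items to the shared wall display
# or to individual operators' consoles, so nobody sees others' clutter.
from collections import defaultdict

ALL = "all"

def route(items, operators):
    wall = []
    consoles = defaultdict(list)
    for text, audience in items:
        if audience == ALL:
            wall.append(text)              # common grid, seen by everyone
        else:
            for op in audience:
                if op in operators:        # ignore unknown operator labels
                    consoles[op].append(text)
    return wall, dict(consoles)

items = [
    ("track 12: heading 090", ALL),
    ("track 7: heading 270", ALL),
    ("fuel low, vehicle 3", ["op1"]),
    ("handoff pending, track 7", ["op2", "op3"]),
]
wall, consoles = route(items, operators={"op1", "op2", "op3"})
print(wall)
print(consoles)
```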

The problem just outlined is even now a critical one, and it seems certain to become more and more critical as time goes by. Several designers are convinced that displays with the desired characteristics can be constructed with the aid of flashing lights and time-sharing viewing screens based on the light-valve principle.

The large display should be supplemented, according to most of those who have thought about the problem, by individual display-control units. The latter would permit the operators to modify the wall display without leaving their locations. For some purposes, it would be desirable for the operators to be able to communicate with the computer through the supplementary displays and perhaps even through the wall display. At least one scheme for providing such communication seems feasible.

The large wall display and its associated system are relevant, of course, to symbiotic cooperation between a computer and a team of men. Laboratory experiments have indicated repeatedly that informal, parallel arrangements of operators, coordinating their activities through reference to a large situation display, have important advantages over the arrangement, more widely used, that locates the operators at individual consoles and attempts to correlate their actions through the agency of a computer. This is one of several operator-team problems in need of careful study.

3) Automatic Speech Production and Recognition: How desirable and how feasible is speech communication between human operators and computing machines? That compound question is asked whenever sophisticated data-processing systems are discussed. Engineers who work and live with computers take a conservative attitude toward the desirability. Engineers who have had experience in the field of automatic speech recognition take a conservative attitude toward the feasibility. Yet there is continuing interest in the idea of talking with computing machines. In large part, the interest stems from realization that one can hardly take a military commander or a corporation president away from his work to teach him to type. If computing machines are ever to be used directly by top-level decision makers, it may be worthwhile to provide communication via the most natural means, even at considerable cost.

Preliminary analysis of his problems and time scales suggests that a corporation president would be interested in a symbiotic association with a computer only as an avocation. Business situations usually move slowly enough that there is time for briefings and conferences. It seems reasonable, therefore, for computer specialists to be the ones who interact directly with computers in business offices.

The military commander, on the other hand, faces a greater probability of having to make critical decisions in short intervals of time. It is easy to overdramatize the notion of the ten-minute war, but it would be dangerous to count on having more than ten minutes in which to make a critical decision. As military system ground environments and control centers grow in capability and complexity, therefore, a real requirement for automatic speech production and recognition in computers seems likely to develop. Certainly, if the equipment were already developed, reliable, and available, it would be used.

In so far as feasibility is concerned, speech production poses less severe problems of a technical nature than does automatic recognition of speech sounds. A commercial electronic digital voltmeter now reads aloud its indications, digit by digit. For eight or ten years, at the Bell Telephone Laboratories, the Royal Institute of Technology (Stockholm), the Signals Research and Development Establishment (Christchurch), the Haskins Laboratory, and the Massachusetts Institute of Technology, Dunn [6], Fant [7], Lawrence [15], Cooper [3], Stevens [26], and their co-workers, have demonstrated successive generations of intelligible automatic talkers. Recent work at the Haskins Laboratory has led to the development of a digital code, suitable for use by computing machines, that makes an automatic voice utter intelligible connected discourse [16].

The feasibility of automatic speech recognition depends heavily upon the size of the vocabulary of words to be recognized and upon the diversity of talkers and accents with which it must work. Ninety-eight per cent correct recognition of naturally spoken decimal digits was demonstrated several years ago at the Bell Telephone Laboratories and at the Lincoln Laboratory [4], [9]. To go a step up the scale of vocabulary size, we may say that an automatic recognizer of clearly spoken alpha-numerical characters can almost surely be developed now on the basis of existing knowledge. Since untrained operators can read at least as rapidly as trained ones can type, such a device would be a convenient tool in almost any computer installation.
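The 98 per cent figure applies to each digit. As a rough illustration, and assuming (a simplification the text does not make) that errors on successive digits are independent, the chance of recognizing a whole multi-digit entry without a single error falls off quickly with its length. The function name is hypothetical.

```python
def whole_string_accuracy(per_digit: float, digits: int) -> float:
    """Chance that every digit in a string is recognized correctly,
    assuming errors on different digits are independent."""
    return per_digit ** digits

# Per-digit accuracy from the text; the string lengths are illustrative.
for n in (1, 4, 7, 10):
    print(f"{n:2d} digits: {whole_string_accuracy(0.98, n):.3f}")
```

Under that assumption, only about 87 per cent of seven-digit numbers would come through entirely correct.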

For real-time interaction on a truly symbiotic level, however, a vocabulary of about 2000 words, e.g., 1000 words of something like basic English and 1000 technical terms, would probably be required. That constitutes a challenging problem. In the consensus of acousticians and linguists, construction of a recognizer of 2000 words cannot be accomplished now. However, there are several organizations that would happily undertake to develop an automatic recognizer for such a vocabulary on a five-year basis. They would stipulate that the speech be clear speech, dictation style, without unusual accent.

Although detailed discussion of techniques of automatic speech recognition is beyond the present scope, it is fitting to note that computing machines are playing a dominant role in the development of automatic speech recognizers. They have contributed the impetus that accounts for the present optimism, or rather for the optimism presently found in some quarters. Two or three years ago, it appeared that automatic recognition of sizeable vocabularies would not be achieved for ten or fifteen years; that it would have to await much further, gradual accumulation of knowledge of acoustic, phonetic, linguistic, and psychological processes in speech communication. Now, however, many see a prospect of accelerating the acquisition of that knowledge with the aid of computer processing of speech signals, and not a few workers have the feeling that sophisticated computer programs will be able to perform well as speech-pattern recognizers even without the aid of much substantive knowledge of speech signals and processes. Putting those two considerations together brings the estimate of the time required to achieve practically significant speech recognition down to perhaps five years, the five years just mentioned.

References

[1] A. Bernstein and M. deV. Roberts, “Computer versus chess-player,” Scientific American, vol. 198, pp. 96-98; June, 1958.

[2] W. W. Bledsoe and I. Browning, “Pattern Recognition and Reading by Machine,” presented at the Eastern Joint Computer Conf., Boston, Mass.; December, 1959.

[3] F. S. Cooper, et al., “Some experiments on the perception of synthetic speech sounds,” J. Acoust. Soc. Amer., vol. 24, pp. 597-606; November, 1952.

[4] K. H. Davis, R. Biddulph, and S. Balashek, “Automatic recognition of spoken digits,” in W. Jackson, Communication Theory, Butterworths Scientific Publications, London, Eng., pp. 433-441; 1953.

[5] G. P. Dinneen, “Programming pattern recognition,” Proc. WJCC, pp. 94-100; March, 1955.

[6] H. K. Dunn, “The calculation of vowel resonances, and an electrical vocal tract,” J. Acoust. Soc. Amer., vol. 22, pp. 740-753; November, 1950.

[7] G. Fant, “On the Acoustics of Speech,” paper presented at the Third Internatl. Congress on Acoustics, Stuttgart, Ger.; September, 1959.

[8] B. G. Farley and W. A. Clark, “Simulation of self-organizing systems by digital computers,” IRE Trans. on Information Theory, vol. IT-4, pp. 76-84; September, 1954.

[9] J. W. Forgie and C. D. Forgie, “Results obtained from a vowel recognition computer program,” J. Acoust. Soc. Amer., vol. 31, pp. 1480-1489; November, 1959.

[10] E. Fredkin, “Trie memory,” Communications of the ACM, pp. 490-499; September, 1960.

[11] R. M. Friedberg, “A learning machine: Part I,” IBM J. Res. & Dev., vol. 2, pp. 2-13; January, 1958.

[12] H. Gelernter, “Realization of a Geometry Theorem Proving Machine,” Unesco, NS, ICIP, 1.6.6, Internatl. Conf. on Information Processing, Paris, France; June, 1959.

[13] P. C. Gilmore, “A Program for the Production of Proofs for Theorems Derivable Within the First Order Predicate Calculus from Axioms,” Unesco, NS, ICIP, 1.6.14, Internatl. Conf. on Information Processing, Paris, France; June, 1959.

[14] J. T. Gilmore and R. E. Savell, “The Lincoln Writer,” Lincoln Laboratory, M. I. T., Lexington, Mass., Rept. 51-8; October, 1959.

[15] W. Lawrence, et al., “Methods and Purposes of Speech Synthesis,” Signals Res. and Dev. Estab., Ministry of Supply, Christchurch, Hants, England, Rept. 56/1457; March, 1956.

[16] A. M. Liberman, F. Ingemann, L. Lisker, P. Delattre, and F. S. Cooper, “Minimal rules for synthesizing speech,” J. Acoust. Soc. Amer., vol. 31, pp. 1490-1499; November, 1959.

[17] A. Newell, “The chess machine: an example of dealing with a complex task by adaptation,” Proc. WJCC, pp. 101-108; March, 1955.

[18] A. Newell and J. C. Shaw, “Programming the logic theory machine,” Proc. WJCC, pp. 230-240; March, 1957.

[19] A. Newell, J. C. Shaw, and H. A. Simon, “Chess-playing programs and the problem of complexity,” IBM J. Res. & Dev., vol. 2, pp. 320-335; October, 1958.

[20] A. Newell, H. A. Simon, and J. C. Shaw, “Report on a general problem-solving program,” Unesco, NS, ICIP, 1.6.8, Internatl. Conf. on Information Processing, Paris, France; June, 1959.

[21] J. D. North, “The rational behavior of mechanically extended man,” Boulton Paul Aircraft Ltd., Wolverhampton, Eng.; September, 1954.

[22] O. G. Selfridge, “Pandemonium, a paradigm for learning,” Proc. Symp. Mechanisation of Thought Processes, Natl. Physical Lab., Teddington, Eng.; November, 1958.

[23] C. E. Shannon, “Programming a computer for playing chess,” Phil. Mag., vol. 41, pp. 256-275; March, 1950.

[24] J. C. Shaw, A. Newell, H. A. Simon, and T. O. Ellis, “A command structure for complex information processing,” Proc. WJCC, pp. 119-128; May, 1958.

[25] H. Sherman, “A Quasi-Topological Method for Recognition of Line Patterns,” Unesco, NS, ICIP, H.L.5, Internatl. Conf. on Information Processing, Paris, France; June, 1959.

[26] K. N. Stevens, S. Kasowski, and C. G. Fant, “Electric analog of the vocal tract,” J. Acoust. Soc. Amer., vol. 25, pp. 734-742; July, 1953.

[27] Webster’s New International Dictionary, 2nd ed., G. and C. Merriam Co., Springfield, Mass., p. 2555; 1958.

Internet Traffic

The Cisco Visual Networking Index (VNI) is the company’s ongoing effort to forecast and analyze the growth and use of IP networks worldwide.

Networks are an essential part of business, education, government, and home communications. Many residential, business, and mobile IP networking trends are being driven largely by a combination of video, social networking and advanced collaboration applications, termed “visual networking.”

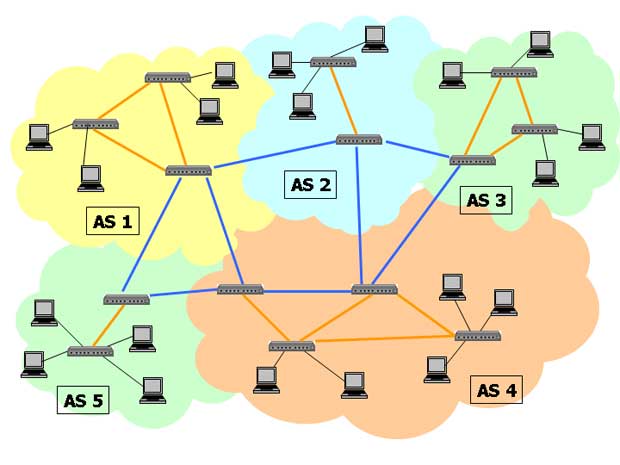

Internet Topology

The delivery of IP traffic through the Internet depends on the complex interactions between thousands of autonomous systems (AS), which exchange routing information using the Border Gateway Protocol (BGP). Each AS presents a common, clearly defined routing policy to the Internet.

Autonomous System Numbers (ASNs) are assigned in blocks by the Internet Assigned Numbers Authority (IANA) to the Regional Internet Registries (RIRs), which in turn assign them to individual autonomous systems. An AS typically falls under the administrative control of a single institution, such as a university, company, or Internet Service Provider (ISP), but multiple organizations can also run BGP using private AS numbers behind a single ISP that connects them all to the Internet.

Autonomous systems fall into three groups:

- Multi-homed AS : has connections to more than one other AS

- Stub AS (single-homed) : is connected to only one other AS (it may, however, have private interconnections or peering that are not visible in public route-view servers)

- Transit AS : provides connections through itself to other AS
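The three groups can be told apart, roughly, from AS-level paths, each listing the autonomous systems that traffic crosses from one end network to another. The sketch below rests on simplifying assumptions: two ASNs adjacent in a path are treated as neighbours, and any AS that appears strictly inside a path is treated as providing transit. Because a transit AS is normally multi-homed as well, "transit" takes precedence. The function name and the example paths are hypothetical; the ASNs come from the 64496-64511 range reserved for documentation (RFC 5398).

```python
from collections import defaultdict

def classify_ases(as_paths: list[list[int]]) -> dict[int, str]:
    """Label each AS seen in a set of AS paths as stub, multi-homed or transit.

    Simplifying assumptions: ASNs adjacent in a path are neighbours, and an AS
    that appears strictly inside a path carries traffic for others (transit).
    """
    neighbours: dict[int, set[int]] = defaultdict(set)
    transit: set[int] = set()
    for path in as_paths:
        # Collapse AS-path prepending (the same ASN repeated back to back).
        hops = [asn for i, asn in enumerate(path) if i == 0 or asn != path[i - 1]]
        for a, b in zip(hops, hops[1:]):
            neighbours[a].add(b)
            neighbours[b].add(a)
        transit.update(hops[1:-1])  # every hop except the two end networks
    labels = {}
    for asn, adjacent in neighbours.items():
        if asn in transit:
            labels[asn] = "transit"  # takes precedence over multi-homed
        elif len(adjacent) > 1:
            labels[asn] = "multi-homed"
        else:
            labels[asn] = "stub"
    return labels

# Hypothetical end-to-end paths using documentation ASNs.
paths = [
    [64496, 64497, 64500],
    [64496, 64498, 64500],
    [64496, 64497, 64501, 64501],  # 64501 prepends itself once
]
for asn, label in sorted(classify_ases(paths).items()):
    print(asn, label)
```

With these paths, 64501 comes out as a stub, 64496 and 64500 as multi-homed, and 64497 and 64498 as transit. Real data would need many vantage points, since private peering does not appear in public route-view tables.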

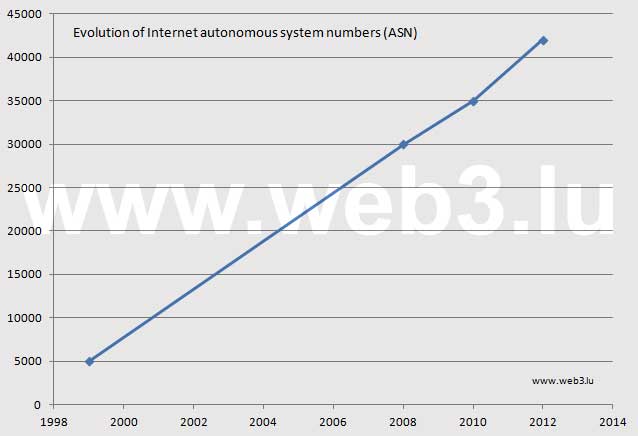

The growth in the number of allocated AS numbers is shown below:

Evolution of Internet autonomous system numbers (ASN)

A public lookup registry, the Routing Assets Database (RADb), originally the Routing Arbiter Database, is run by Merit Network Inc. to make fundamental information about networks publicly available. It was developed in the early 1990s as part of the NSF-funded Routing Arbiter Project. Merit also offers a freely available, stand-alone Internet Routing Registry database server (IRRd) supporting the Routing Policy Specification Language standards RPSL and RPSLng.
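Records in RADb and other IRRd-based registries are RPSL objects: plain text with one "attribute: value" pair per line, where a line beginning with whitespace or "+" continues the previous value (RFC 2622). The following is a minimal, offline parser sketch for a single object. The function name and the sample route object (documentation prefix 192.0.2.0/24, documentation ASN AS64500, maintainer MAINT-EXAMPLE) are hypothetical, and comment handling is reduced to skipping lines that start with "#".

```python
def parse_rpsl(text: str) -> dict[str, list[str]]:
    """Parse one RPSL object into {attribute: [values]} (attributes may repeat)."""
    attrs: dict[str, list[str]] = {}
    last = None
    for line in text.splitlines():
        if not line.strip() or line.startswith("#"):
            continue  # skip blank lines and full-line comments
        if line[0] in " \t+":
            # Continuation line: extend the most recent value.
            if last is None:
                raise ValueError("continuation line before any attribute")
            extra = line[1:].strip() if line[0] == "+" else line.strip()
            attrs[last][-1] = (attrs[last][-1] + " " + extra).strip()
            continue
        # Split on the first colon only, so IPv6 values keep their colons.
        name, sep, value = line.partition(":")
        if not sep:
            raise ValueError(f"not an RPSL attribute line: {line!r}")
        last = name.strip().lower()
        attrs.setdefault(last, []).append(value.strip())
    return attrs

sample = """route:      192.0.2.0/24
descr:      Example network
            (documentation prefix)
origin:     AS64500
mnt-by:     MAINT-EXAMPLE
source:     RADB
"""
print(parse_rpsl(sample))
```

Keeping every value in a list preserves attributes such as mnt-by, which may legitimately appear more than once in an object.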

Internet meshed autonomous systems

The topology of early networks was a simple star or tree structure. In the early Internet there was one Commercial Internet eXchange (CIX), two Federal Internet Exchanges (FIX) and four Network Access Points (NAP). Today the topology of the Internet is a huge mesh of millions of switches, routers, proxies, peering points and other devices, which form the following network elements:

- Internet exchange point (IX or IXP) : to exchange Internet traffic between autonomous systems

- Content Delivery Network (CDN) : to serve content to end-users with high availability and high performance

- Tier-1 Network : a transit-free network that peers with every other Tier-1 network

- Tier-2 Network : a network that peers with other networks, but still purchases IP Transit to reach other portions of the Internet

- Tier-3 Network : a network that solely purchases IP Transit from other networks
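The tier definitions above can be read as rules over two kinds of relationship: buying IP transit from a provider, and peering. The sketch below encodes them for a small, invented set of networks (the names A to E and the function name are hypothetical). As a simplifying assumption, it treats every transit-free network as a Tier-1 candidate and checks that each candidate peers with all the others; a transit-free network that fails this check is left unclassified, since none of the three definitions covers it.

```python
from dataclasses import dataclass, field

@dataclass
class Network:
    name: str
    providers: set[str] = field(default_factory=set)  # networks it buys IP transit from
    peers: set[str] = field(default_factory=set)      # networks it peers with

def classify_tiers(networks: list[Network]) -> dict[str, str]:
    transit_free = {n.name for n in networks if not n.providers}
    tiers = {}
    for n in networks:
        if n.name in transit_free:
            # Tier-1: buys no transit and peers with every other transit-free network.
            others = transit_free - {n.name}
            tiers[n.name] = "Tier-1" if others <= n.peers else "unclassified"
        elif n.peers:
            tiers[n.name] = "Tier-2"  # peers, but still buys transit
        else:
            tiers[n.name] = "Tier-3"  # only buys transit
    return tiers

# Hypothetical networks A to E.
nets = [
    Network("A", peers={"B"}),
    Network("B", peers={"A"}),
    Network("C", providers={"A"}, peers={"D"}),
    Network("D", providers={"B"}, peers={"C"}),
    Network("E", providers={"C"}),
]
print(classify_tiers(nets))
```

Here A and B come out as Tier-1, C and D as Tier-2, and E as Tier-3.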

The term Internet backbone refers to the principal data routes between the core routers and the large, strategically interconnected networks of the Internet, although it has no precise definition. Network security is an important topic in the Internet community, and the Internet Society is actively involved in work on routing security.

The following list provides some useful links to tools and further information about the Internet topology:

- The Internet Map, by Ruslan Enikeev

- Reverse Traceroute and Looking Glass Servers in the World, by CAIDA (The Cooperative Association for Internet Data Analysis)

- IPv4 and IPv6 BGP Looking Glasses at BGP4.as

- Teralink Looking Glass, by P&T Luxembourg

Housing, sweet housing

paperJam.lu, 9 July 2002, by Jean-Michel Gaudron

For a company, a website takes up space. External hosting is usually the natural solution.

Today hardly anyone still fails to see that a company needs a website. A recent study by Mindforest, presented last March, counted about 2,400 operational websites in Luxembourg alone. That is an overall rate of about 11%, which may seem modest, but it should be put into perspective according to company size: three quarters of companies employing more than 250 people have an active Internet presence (as do 61% of those with more than 90 employees).

“Overall, there is an awareness that the Internet now has a natural place in people’s habits and has become an indispensable promotional tool in its own right. The approach is more mature today than it was in the days of the ‘pioneers’. Even so, many companies are still somewhat wary of the Internet as a tool; they understand that they must be present, but they have not necessarily understood why, nor what benefit they can really draw from it,” observes Nic Nickels, Director of Espace Net, a company that specializes in particular in easy-to-use “content management” applications, which it was the first to introduce in Luxembourg.

Whether a site is static or highly dynamic, and all the more so in the latter case, behind the more or less attractive pages shown on screen lies a whole infrastructure that companies do not necessarily have the time, the means or simply the desire (often a careful mix of all three) to manage themselves.

Apart from the special case of banks, which for obvious reasons of security and confidentiality must house their data servers on their own premises, many companies therefore turn to hosting providers, which are often the IT firms that design and develop the sites.

“And even for banks, we can offer to place their equipment in premises allocated exclusively to them, to which only they have access, so that they can still offload this burden outside their own buildings,” explains Xavier Buck, General Manager of the very young company Datacenter Luxembourg. The company specializes in Internet services and professional hosting as well as in business continuity and recovery, and today provides global solutions, including to most of the main Luxembourg Internet providers. “Our starting point is that, when it comes to hosting, companies need global solutions, both to ensure good connections between the subsidiaries of a group and for their relations with suppliers or customers. There is therefore a need for centralized data servers, and we meet it.”

Datacenter’s activities thus go well beyond website hosting alone, which is no longer necessarily a priority target: the 500 m2 of its computer room are already well filled, though not yet saturated, by about a hundred servers, to which the company provides secure physical access 24 hours a day, 7 days a week.

Dedicated or shared?

Alongside this ‘mega-infrastructure’, which is increasingly open to foreign companies wishing to gain a foothold in Luxembourg this way, the local market of course has no shortage of Internet hosting solutions.

But which type of solution should a company choose? For Samuel Dickes, Internet manager at Luxweb (a specialized subsidiary of Editus), it depends above all on the needs expressed. “For an SME, for example, it will be important to be able to offer a complete service: building the site, statistics, reliable backup, speed and availability. That last point matters a great deal: you can have the best website in the world, but if visitors take too long to connect to it, they will quickly give up!”

When it comes to availability, Luxembourg providers seem relatively well equipped. Luxweb, which recently brought back its servers originally hosted in the United States, relies on the P&T’s 150 Mbps backbone (of which it is in fact an integral part through Editus Luxembourg). Espace Net, for its part, has two incoming lines, one terrestrial and one wireless, connected to two different backbones, so as to keep availability close to 100% even if one of the two lines fails.

This concern for optimal availability can be taken even further, as with Web Technologies, which runs its own Internet network connected over three links: a main 10 Gbit/s line linking Luxembourg to Frankfurt (one of the most important data exchange points in Europe); a line linking its network to the LIX (Luxembourg Internet Exchange) to guarantee optimal speed for the community of Internet users in Luxembourg; and an additional 10 Gbit/s backup line linking Luxembourg to Düsseldorf.
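The benefit of Espace Net’s two lines and of Web Technologies’ three links can be estimated with the usual parallel-redundancy formula: a site is unreachable only if every link is down at the same time. The sketch assumes link failures are independent, which is what connecting to different backbones over different media tries to approximate, and it treats all links as interchangeable (which overstates the value of a link such as the LIX one, since it only serves Luxembourg users). The 99% per-link availability is an illustrative assumption, not a figure from the article, and the function name is hypothetical.

```python
from math import prod

def combined_availability(*links: float) -> float:
    """Probability that at least one link is up, assuming independent failures."""
    return 1 - prod(1 - a for a in links)

# Illustrative per-link availability; not a figure from the article.
per_link = 0.99
print(f"one link:    {combined_availability(per_link):.4%}")
print(f"two links:   {combined_availability(per_link, per_link):.4%}")
print(f"three links: {combined_availability(per_link, per_link, per_link):.6%}")
```

Under these assumptions, going from one link to two cuts expected downtime from about 3.65 days a year to roughly 53 minutes.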

The P&T themselves, providers of one of the country’s main backbones, naturally include hosting in their range of Internet services, though not necessarily as a priority, since two of their subsidiaries, Editus and Visual Online, offer it as well. “Let’s say that our three services are complementary,” comments Marco Barnig, Head of the Commercial Unit of the Telecommunications Division of the Entreprise des P&T. “Visual Online concentrates more on housing, equipment and application hosting, and specializes more in commercial applications, while Editus can focus more on activities related to the Yellow Pages, which it also manages. For our part, we are developing hosting for new web technologies and innovative applications such as virtual worlds, 3D animations, interactive online games, etc.”

A company that wants its data hosted on “outside” servers can also choose between two types of use: dedicated servers, reserved for its sole and exclusive use, or servers shared with other sites, which is of course cheaper but also limits the space available.

Typically, shared hosting is perfectly suited to static “business card” sites, or even light dynamic sites that do not need much space. Customers are usually given access to a MySQL-type database and the ability to use a few free programming languages such as PHP or Perl. The inherent risk of this formula is that an unscrupulous host may be tempted to cut back technical support too far.

“We apply the technical recommendations provided by Sun, and we never put more than 200 sites on one machine. Even so, we only rarely go above 10% of processor capacity,” says Samuel Dickes.
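Luxweb’s two figures lend themselves to a quick capacity estimate. The sketch below applies the 200-sites-per-machine ceiling to a given number of shared sites, and derives the average processor share per site on a full machine running at the 10% mark. The function names are hypothetical, and the 600 sites in the example (the number of commercial sites the article attributes to Editus) are only an illustration, since the article does not say how those sites are spread across machines.

```python
import math

MAX_SITES_PER_MACHINE = 200  # ceiling quoted by Samuel Dickes (Luxweb)
TYPICAL_CPU_LOAD = 0.10      # "rarely" above 10% of processor capacity

def machines_needed(sites: int, per_machine: int = MAX_SITES_PER_MACHINE) -> int:
    """Smallest number of shared-hosting machines that respects the ceiling."""
    return math.ceil(sites / per_machine)

def cpu_share_per_site(cpu_load: float = TYPICAL_CPU_LOAD,
                       sites: int = MAX_SITES_PER_MACHINE) -> float:
    """Average processor share per site on a fully loaded machine."""
    return cpu_load / sites

print(machines_needed(600))           # 3
print(f"{cpu_share_per_site():.2%}")  # 0.05%
```

The contrast with a dedicated server, where a single site can use the whole processor, is what the next paragraph describes.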

More expensive, but also more flexible, a dedicated server makes it easier to run a more complex site with specific requirements. Since the site resides alone on its server, it can use all of that server’s resources, which increases stability, and the customer can also run its own software and solutions.

Pricing that varies

Different strategies also mean different pricing. Setting aside what Mr. Dickes calls “the very low-end hosts, which rely only on the volume of hosted sites to offer basic packages at 5 dollars a month,” the price range is quite wide.

“It will also depend on any development work on the site,” says Nic Nickels. Espace Net claims some 200 hosted sites, practically all of them built in-house, so there are two levels of cost for the customer, depending on how sophisticated the site’s graphic design is and therefore how much design work it requires. “Let’s say that for an ‘ordinary’ site, with text and a few photos plus content, and good bandwidth, you can already get something very decent for 500 Euro. After that, we offer monthly packages starting at 25 Euro a month, as if we were renting out applications: the customer pays only for what it needs, and options can be added or removed at will,” explains Mr. Nickels, for whom the key to a “good” hosting business lies in giving the customer as much freedom as possible. “And even for customers who entrust us with updating their content, we work with very flexible and fast interfaces, so the final bill is not very steep.”

Web Technologies, which hosts nearly 500 “commercial” sites (companies and non-profit associations, or asbl), offers “pro” packages starting at 415 Euro a year including VAT, which include 100 MB of space and unlimited POP3 mailboxes and e-mail aliases.

100 MB of space is also the basic offer at Editus, which likewise built 80% of the 600 commercial sites it hosts. Its offer starts at 300 Euro a year and may rise slightly after the summer because of added security services: anti-virus scanning of incoming e-mail on Editus’s own servers, so that the end customer has practically no such worries left.

“We should not expect major price changes in the near future, up or down, any more than there have been over the last two years. And if prices do have to rise, it will essentially be because the associated services are growing. For a backup server alone, you have to budget half a million Euro,” explains Mr. Dickes.

Companies such as Luxembourg Online, which presents itself as the largest independent Internet access provider in Luxembourg, or the P&T, post somewhat higher rates, justified by a corresponding quality of service: 115 Euro a month for Luxembourg Online’s “Package Gold” (50 MB of disk space, domain name registration included), or 138 Euro at the P&T (also 50 MB, domain name not included). “We certainly don’t have the lowest prices on the market, but our value for money is very competitive,” says Marco Barnig.
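Because the quoted prices mix one-off, yearly and monthly charges, they are easier to compare on a common first-year basis. The sketch below does only that arithmetic. It assumes that Espace Net’s 500 Euro is a one-off site-creation fee and that the P&T’s 138 Euro is monthly, by parallel with the Luxembourg Online figure; the names OFFERS and first_year_cost are hypothetical.

```python
# Entry-level prices quoted in the article (Euro, 2002).
# Assumptions: Espace Net's 500 Euro is a one-off site-creation fee, and the
# P&T's 138 Euro is monthly, by parallel with the Luxembourg Online figure.
OFFERS = {
    "Espace Net": {"one_off": 500, "monthly": 25},
    "Web Technologies": {"yearly": 415},
    "Editus": {"yearly": 300},
    "Luxembourg Online (Package Gold)": {"monthly": 115},
    "P&T": {"monthly": 138},
}

def first_year_cost(offer: dict[str, float]) -> float:
    """One-off fees plus one year of recurring charges."""
    return (offer.get("one_off", 0)
            + offer.get("yearly", 0)
            + 12 * offer.get("monthly", 0))

# List offers from cheapest to most expensive over the first year.
for name, offer in sorted(OFFERS.items(), key=lambda kv: first_year_cost(kv[1])):
    print(f"{name:34s} {first_year_cost(offer):>6.0f} EUR")
```

This only normalizes the time base: the packages are not like-for-like (100 MB versus 50 MB of space, domain name included or not, site development bundled or not).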

EuroDNS: domain names as seen by Xavier Buck (Datacenter)

A website means a domain name. Administratively, reserving domain names is sometimes complex, especially as soon as one looks beyond national borders. That is why Datacenter chose to offer a global, easy-to-use solution in the form of the site www.eurodns.lu. There, you can check whether a domain name already exists under any extension (.lu, .com, .net, .biz, etc.), look up information about the owners of reserved domains where applicable, and finally reserve the desired name. It costs between 0 and 90 Euro to obtain a domain name, then between 15 and 70 Euro for the annual renewal.

“We launched the site in February, and within four months we had recorded more than 2,000 domain name reservations. The operation is growing so fast that we are considering a spin-off to make it an independent company.”

For Luxembourg, these fees, set by Restena, are 50 Euro and 40 Euro respectively, which places the country at the “high” end of domain name registration fees. “We also find them very hard to work with,” Mr. Buck regrets. “And this high pricing is very unfortunate, because it inevitably has repercussions on many other things.”

As of 30 April 2002, 15,067 .lu domain names were registered with Restena. Worldwide, statistics provided by the www.eurodns site put the total number of domain names at 30.29 million as of 30 May 2002, of which 23.11 million use the .com extension.

EuroDNS also offers pre-reservation of names under the .eu extension, adopted by the European Telecommunications Council on 25 March of this year. “As part of the EUDR (European Domain Registry) consortium, we are also in the running for full management of the .eu domain: the Commission will give its verdict on our bid after the summer. It is not a commercial matter, since the organization selected will be a non-profit association. But in terms of the project and of visibility, it would be huge.”

Practical: where to host in Luxembourg?

Here is a non-exhaustive list of companies active in website and server hosting in Luxembourg. Further information is available in our online Index at www.paperjam.lu.

Accessit Luxembourg s.à r.l.

24, rue Beaumont

L-1219 Luxembourg

Tel.: 26 20 29 99; Fax: 26 34 08 25

www.luxauto.lu; www.luxdomain.lu; www.menu.lu; www.agenda.lu

ArianeSoft S.A.

24-26, rue de la Gare (Galerie Kons)

L-1616 Luxembourg

Tel.: 26 29 50-1; Fax: 26 29 50 50

arianesoft.com

Cegecom S.A.

3, rue Jean Piret, B.P. 2708

L-1027 Luxembourg

Tel.: 26 49 91; Fax: 26 49 96 99

www.cegecom.lu

Datacenter Luxembourg S.A.

68-70, bd de la Pétrusse

L-2320 Luxembourg

Tel.: 26 19 16 – 1; Fax: 26 20 29 96

www.dclux.com; www.datacenter.lu

Editus Luxembourg S.A.

45, rue Glesener

L-1631 Luxembourg

Tel.: 49 60 51-1; Fax: 49 60 56

www.annuaire.lu; www.luxweb.lu; www.editus.lu

Espace Net s.à r.l.

15, route d’Esch

L-1470 Luxembourg

Tel.: 25 32 32 1; Fax: 25 32 32 34 3

www.espace-net.lu

Equant S.A.

(Formerly: Global One Communications)

201, route de Thionville

(B.P. 09, L-5801 Hesperange)

Tel.: 27 30 11; Fax: 27 30 13 01

www.equant.com

Global Media Systems S.A.

2, rue Wilson

L-2732 Luxembourg

Tel.: 48 28 11; Fax: 48 28 11 0

www.gms.lu

Intelligent-IP S.A.

7, rue Pletzer (Centre Helfent)

L-8080 Bertrange

Tel.: 264363-1; Fax: 264363-73

www.iip.lu

Luxembourg Online S.A.

14, avenue du X Septembre

L-2550 Luxembourg

Tel.: 45 25 64; Fax: 45 93 34

www.internet.lu; www.inc.lu

P&T Luxembourg

8a, Avenue Monterey

L-2020 Luxembourg

Tel.: 47 65 -1; Fax: 47 51 10

www.ept.lu

Primesphere S.A.

(Formerly: Tecsys infopartners)

4, rue Jos Felten

L-1508 Howald

Tel.: 40 11 61; Fax: 40 11 64 00

www.primesphere.com

SurfBizzXact s.à r.l.

159, rue d’Esch

L-4380 Ehlerange

Tel.: 2650 0182; Fax: 2650 0183

www.surfbizzxact.com; www.kiwikom.com; www.reseller.lu

Tiscali Luxembourg S.A.

(Formerly: WorldOnline)

25C, Boulevard Royal

L-2449 Luxembourg

Tel.: 26 26 07 1; Fax: 26 26 07 99

www.tiscali.lu

3LuX Internet Technologies

41, rue Fontaine

L-4122 Esch sur Alzette

Tel.: 54 46 37; Fax: 54 70 02

www.3lux.com

Visual Online S.A.

1 rue de Bitbourg, B.P. 2534

L-1025 Luxembourg

Tel.: 42 44 11-1; Fax: 42 44 11 44

www.vo.lu; www.connect.lu

Web Technologies S.A.

30, Val St André

L-1128 Luxembourg

Tel.: 26 25 77-1; Fax: 26 25 77 78

www.web.lu; www.webtechnologies.lu

Worldcom S.A.

4a-4b rue de l’Etang

L-5326 Contern

Tel.: 27 00 81 11; Fax: 27 00 81 00

www.worldcom.lu

Fathers of the Internet

J.C.R. Licklider

Vinton Cerf : 1943

- degree in Mathematics from Stanford University

- Systems Engineer at IBM (QUIKTRAN)

- 1968 – 1972 : University of California (UCLA), Los Angeles (from MS to PhD)

- 1969 : graduate student in Professor Leonard Kleinrock’s data packet networking group at UCLA (ARPANET)

- 1972 – 1976 : Assistant Professor at Stanford University; DARPA scientist

- 1982 – 1986 : Vice President at MCI Digital Information Services (MCI Mail)

- 1988 : Fellow of IEEE

- 1992 : Cofounder of the Internet Society

- 1994 : Fellow of the Association for Computing Machinery

- 1996 : Award “SIGCOMM”

- 1996 : Award “Yuri Rubinsky Memorial”

- 1996 : Certificate of Merit from The Franklin Institute

- 1997 : Award “National Medal of Technology”

- 1999 – 2007 : Board Member of ICANN

- 2000 : Award “Living Legend Medal” from the Library of Congress

- 2000 : Fellow of the Computer History Museum

- 2000 : Fellow of the Association for Women in Science (AWIS)

- 2004 : Award “Turing”

- 2005 : Award “Presidential Medal of Freedom”

- 2006 : Award “National Inventors Hall of Fame”

- 2006 : Honorary Fellow of the Society for Technical Communication (STC)

- 2008 : Award “Japan Prize”

- 2008 : Worshipful Company of Information Technologists; Freedom of the City of London

- 2008 : Honorary membership in the Yale Political Union

- 2010 : Commissioner for the Broadband Commission for Digital Development

- 2011 : Fellow of the Hasso Plattner Institute (HPI)

- 2011 : Distinguished Fellow of British Computer Society

Robert W. Taylor : 1932

- 1954 – 1964 : University of Texas at Austin (bachelor’s and master’s degrees)

- 1961 – 1965 : Researcher and program manager at NASA

- 1965 – 1966 : Deputy to Ivan Sutherland at ARPA

- 1966 – 1969 : Director of ARPA’s Information Processing Techniques Office (IPTO)

- 1970 – 1983 : Founder and Manager of the Computer Science Laboratory at Xerox PARC

- 1984 – 1996 : Founder and Manager of Digital Equipment Corporation’s Systems Research Center

- 1999 : Award “National Medal of Technology”

- 2004 : Award “Draper Prize” from the National Academy of Engineering

Leonard Kleinrock

Tim Berners-Lee : 1955

- 1973 – 1976 : Studies at The Queen’s College, Oxford

- 1976 – 1980 : Software engineer at Plessey Telecommunications and at D.G.Nash Ltd

- 1980 : Independent contractor at CERN

- 1980 – 1984 : Design Lead at John Poole’s Image Computer Systems

- 1984 – 1994 : Scientist at CERN

- 1989 : Proposal for an information management system at CERN (the origin of the World Wide Web)

- 1991 : Public presentation of the CERN web server at Hypertext 91

- 1994 – 2004 : Professor at the Laboratory for Computer Science at the Massachusetts Institute of Technology (MIT)

- 1994 : Founder of W3C at MIT

- 1994 : Member of the World Wide Web Hall of Fame

- 1995 : Award “ACM Software System”

- 1995 : Award “Young Innovator of the Year” from the Kilby Foundation

- 2001 : Patron of the East Dorset Heritage Trust

- 2001 : Fellow of the American Academy of Arts and Sciences

- 2003 : Fellow Award of the Computer History Museum

- 2004 : Award “Millennium Technology Prize of Finland”

- 2004 : Professor in Computer Science at the School of Electronics and Computer Science, University of Southampton, England

- 2004 : Knight Commander of the Most Excellent Order of the British Empire

- 2007 : Award “Academy of Achievement’s Golden Plate”

- 2007 : Order of Merit

- 2008 : Award “IEEE/RSE Wolfson James Clerk Maxwell”

- 2009 : Foreign associate of the United States National Academy of Sciences

- 2009 : Award “Webby for Lifetime Achievement”

- 2011 : Award “Mikhail Gorbachev”

- 2011 : IEEE Intelligent Systems’ AI’s Hall of Fame

Ivan Edward Sutherland

Douglas C. Engelbart : 1925

- 1948 : Electrical Engineering Studies at Oregon State College

- 1948 – 1951 : Ames Research Center, National Advisory Committee for Aeronautics (forerunner of NASA)

- 1952 – 1955 : University of California, Berkeley (from MS to PhD)

- 1956 : Assistant Professor at Berkeley

- 1957 – 1977 : Stanford Research Institute (SRI)

- 1962 : Report “Augmenting Human Intellect: A Conceptual Framework”

- 1963 : Creation of the Augmentation Research Center (ARC) at SRI

- 1967 – 1970 : Patent for the computer mouse, later licensed to Apple

- 1968 : “The Mother of All Demos” (NLS, the oN-Line System)

- 1977 : SRI sells the NLS system to Tymshare

- 1978 : Closing of ARC for lack of funding

- 1989 : Founder of the Bootstrap Institute

- 1998 : Founder of the Doug Engelbart Institute

- 1995 : Award “Yuri Rubinsky Memorial”

- 1996 : Award “Franklin Institute’s Certificate of Merit”

- 1997 : Award “Lemelson-MIT Prize”

- 1998 : Award “ACM SIGCHI Lifetime Achievement”

- 1999 : Award “Benjamin Franklin Medal”

- 2000 : Award “National Medal of Technology”

- 2001 : Award “British Computer Society’s Lovelace Medal”

- 2005 : Award “Norbert Wiener Award”; Fellow of the Computer History Museum

- 2005 : National Science Foundation grant to fund the open source HyperScope

- 2011 : IEEE Intelligent Systems’ AI’s Hall of Fame
